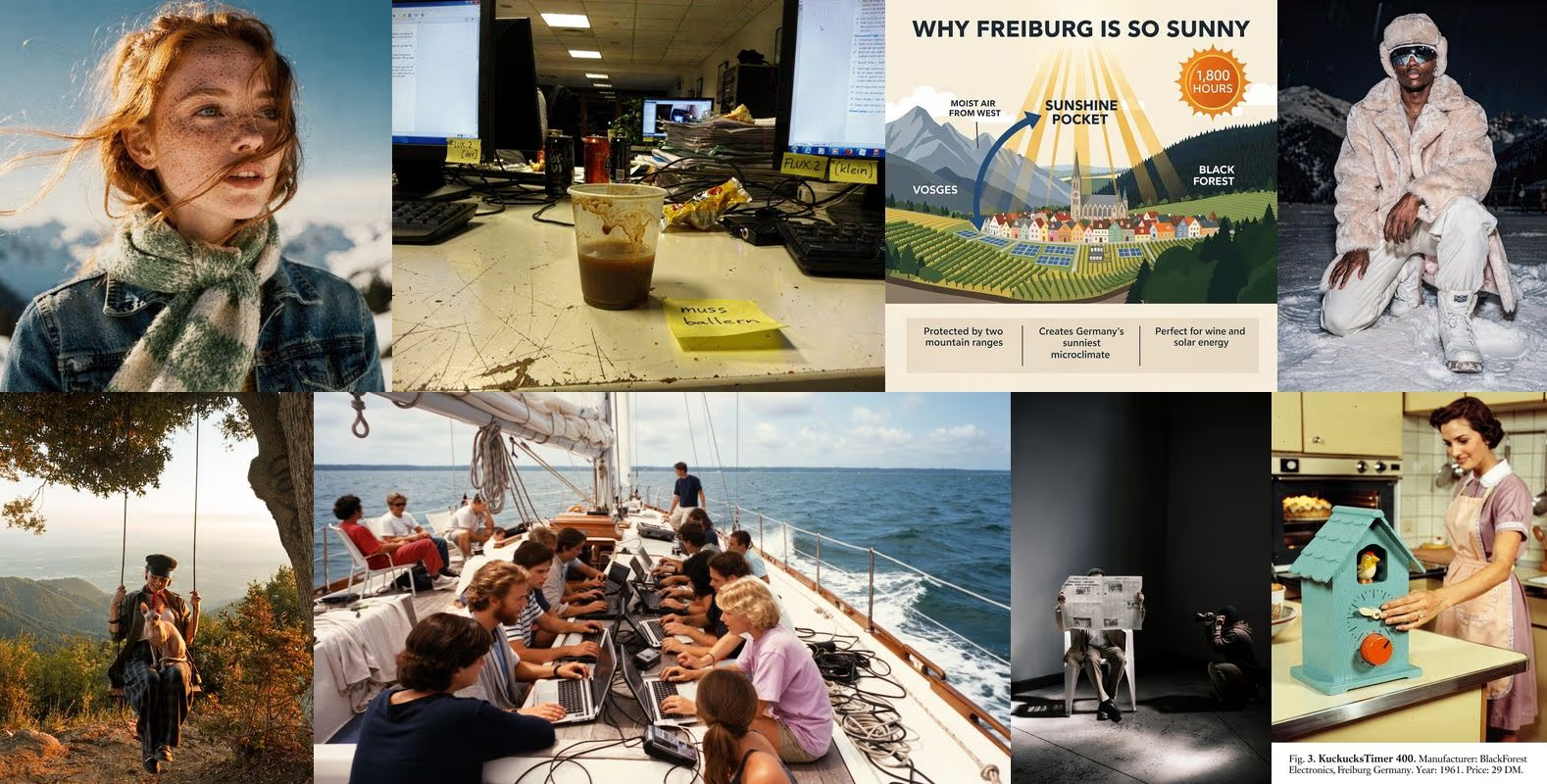

DeepInfra is excited to support FLUX.2 from day zero, bringing the newest visual intelligence model from Black Forest Labs to our platform at launch. We make it straightforward for developers, creators, and enterprises to run the model with high performance, transparent pricing, and an API designed for productivity.

FLUX.2 introduces a new level of visual intelligence, moving beyond traditional pixel-only diffusion approaches. The model interprets lighting, physical relationships, and spatial structure with greater accuracy, producing images with higher realism, stronger coherence, and consistent character or product identity even in complex scenes.

FLUX.2 Model Overview

Character and Product Control

- Multi-reference input for consistent character identity

- Precise product placement in complex scenes

- Style transfer that keeps core visual features intact

Resolution and Quality

- Strong grounding in real-world lighting, physics, and spatial logic

- Higher detail quality approaching real photography

- Flexible output up to 4MP in any aspect ratio

- Reliable results from inputs as low as 400x400 px

- Expand and shrink operations for intelligent pixel addition or removal
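
As a rough illustration of the "up to 4MP in any aspect ratio" point, the sketch below computes the largest width and height that fit a pixel budget for a given aspect ratio. It rests on two assumptions that do not come from the announcement: "4MP" is read as 4,000,000 pixels, and dimensions are rounded down to a multiple of 16. The function name `fit_dimensions` is hypothetical, so check the model documentation for the actual limits.

```python
import math

MAX_PIXELS = 4_000_000  # assumption: "4MP" interpreted as 4,000,000 pixels
STEP = 16               # assumption: dimensions rounded down to a multiple of 16


def fit_dimensions(aspect_w: int, aspect_h: int,
                   max_pixels: int = MAX_PIXELS) -> tuple[int, int]:
    """Return the largest (width, height) close to aspect_w:aspect_h
    whose pixel count stays within max_pixels."""
    if aspect_w <= 0 or aspect_h <= 0:
        raise ValueError("aspect ratio terms must be positive")
    ratio = aspect_w / aspect_h
    # Solve width * height = max_pixels with width / height = ratio.
    height = math.sqrt(max_pixels / ratio)
    width = height * ratio
    # Round down so the pixel budget is never exceeded.
    return int(width) // STEP * STEP, int(height) // STEP * STEP


if __name__ == "__main__":
    for w, h in [(1, 1), (16, 9), (9, 16), (21, 9)]:
        print(f"{w}:{h} ->", fit_dimensions(w, h))
```

Under these assumptions a 1:1 request resolves to 2000x2000 and a 16:9 request to 2656x1488. Rounding down costs a little resolution but keeps the result inside the budget.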

Brand and Design Fidelity

- Exact HEX-based color matching

- Reliable text rendering for UI, typography, and infographics

- Structured prompting with JSON, pose guidance, and other controls
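
To make the JSON prompting and HEX color points concrete, here is a minimal sketch that assembles a structured prompt and rejects malformed color codes before anything is sent. The field names (`scene`, `subject`, `color_palette`, `text`) and the helper `build_structured_prompt` are illustrative assumptions, not a documented FLUX.2 schema.

```python
import json
import re

# Six-digit HEX color such as #FF5733.
HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}")


def build_structured_prompt(scene: str, subject: str, palette: list[str],
                            text_overlay: str = "") -> str:
    """Serialize a structured prompt to JSON.

    The keys used here are hypothetical; adapt them to whatever
    structure the model documentation recommends.
    """
    bad = [c for c in palette if not HEX_COLOR.fullmatch(c)]
    if bad:
        raise ValueError(f"invalid HEX colors: {bad}")
    prompt = {
        "scene": scene,
        "subject": subject,
        "color_palette": [c.upper() for c in palette],
    }
    if text_overlay:
        prompt["text"] = text_overlay  # text to render in the image
    return json.dumps(prompt, indent=2)


if __name__ == "__main__":
    print(build_structured_prompt(
        scene="studio product shot on a white table",
        subject="ceramic coffee mug",
        palette=["#ff5733", "#1A1A1A"],
        text_overlay="MORNING BLEND",
    ))
```

Validating colors on the client keeps a typo in a brand color from silently producing an off-brand image.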

More Controls

- Strong prompt accuracy for complex instructions

- Support for prompts of up to 32,000 characters

- Designed to balance high quality with sub-10-second generation times

Why Run FLUX.2 on DeepInfra

DeepInfra is built for teams that need strong performance, transparent pricing, and dependable infrastructure. These strengths directly benefit FLUX.2 users.

Fast and Consistent Performance

Our NVIDIA-optimized infrastructure is designed specifically for diffusion workloads, delivering low latency, stable throughput, and smooth scaling during peak creative or production demand.

Competitive, Usage-Based Pricing

DeepInfra keeps costs predictable with simple usage-based billing. You can explore the model, run high-volume projects, or scale pipelines without upfront costs or long-term commitments.

Developer-First API

Our OpenAI-compatible API integrates easily into existing systems. With no complex setup or infrastructure to manage, you can move quickly from testing to deployment.
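
As a sketch of what an OpenAI-style image request could look like, the snippet below builds a POST to an `images/generations` route using only the Python standard library. The base URL, the model identifier, and the assumption that FLUX.2 is served through this route are placeholders, not details confirmed by this announcement, so take the real values from the model page and documentation.

```python
import json
import os
import urllib.request

# Assumptions: both values are placeholders; confirm them in the docs.
BASE_URL = "https://api.deepinfra.com/v1/openai"
MODEL = "black-forest-labs/FLUX-2-example"  # hypothetical model identifier


def build_image_request(prompt: str, api_key: str,
                        size: str = "1024x1024") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style image generation request."""
    payload = {"model": MODEL, "prompt": prompt, "size": size, "n": 1}
    return urllib.request.Request(
        url=f"{BASE_URL}/images/generations",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate_image(prompt: str, api_key: str) -> dict:
    """Send the request and return the parsed JSON response."""
    request = build_image_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.load(response)


if __name__ == "__main__":
    # DEEPINFRA_API_KEY is an assumed environment variable name.
    key = os.environ.get("DEEPINFRA_API_KEY")
    if key:
        print(generate_image("a red cube on a glass table", key))
```

Keeping request construction separate from sending makes the payload easy to inspect and unit test without network access.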

Enterprise-Grade Privacy

With our zero-retention policy, your inputs, outputs, and user data remain completely private. DeepInfra is SOC 2 and ISO 27001 certified, following industry best practices in information security and privacy.

Getting Started with FLUX.2 on DeepInfra

You can try FLUX.2 today through our model page or explore our documentation for integration examples, pricing, and workflow guides. The combination of FLUX.2's visual intelligence and DeepInfra's scalable infrastructure makes next-generation image creation available to everyone, from individual creators to enterprise teams. We're excited to support what you build next.

© 2026 DeepInfra. All rights reserved.