
DeepInfra is serving NVIDIA Cosmos 3, the first open world foundation model for physical AI that reasons before it generates, from day zero of its release. Available in two variants, Cosmos 3 Nano and Cosmos 3 Super, the models give developers a cost-efficient foundation for building robots, autonomous vehicles, and simulation workflows, and for generating synthetic data at scale.

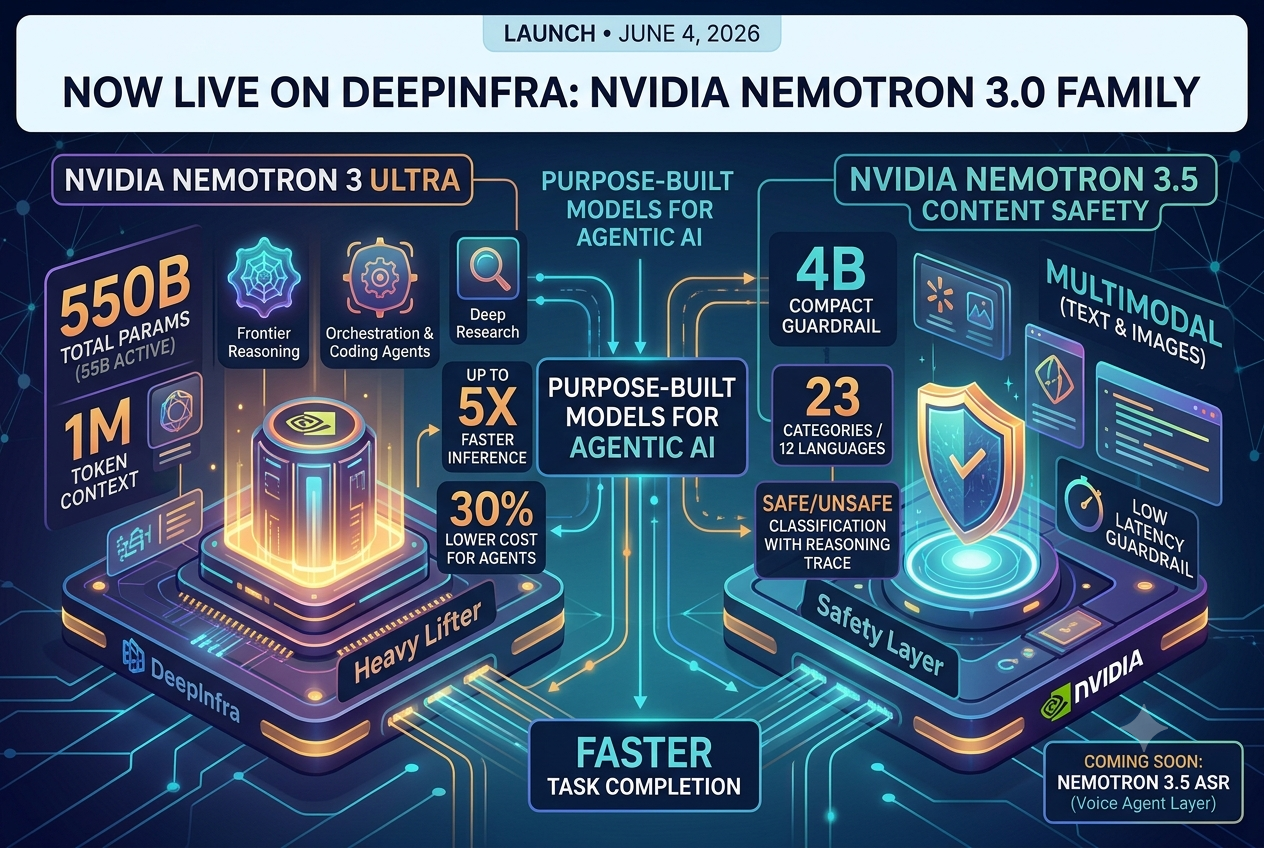

Published on 2026.06.04 by Yessen Kanapin

Nemotron 3 Ultra, 3.5 Content Safety and ASR models are now live on DeepInfra platform.

Nemotron 3 Ultra and Nemotron 3.5 Content Safety are live on DeepInfra as of today. Here's what they are and why we think they're worth your attention.

Published on 2026.05.26 by DeepInfra

OpenClaw Use Cases That Deliver Real ROI

An OpenClaw agent that reads your email, opens pull requests, and watches a server is only useful if running it doesn’t feel like leaving the meter running. That’s the quiet constraint behind every discussion of OpenClaw use cases. Most of the workflows people show off (morning briefings, multi-agent research, ambient monitoring) only make sense if each […]

Published on 2026.05.26 by DeepInfra

OpenClaw Cost Optimization: Cut AI API Costs by 90%

A single ask in an OpenClaw session can cost more than a full evening of casual ChatGPT use. Ask your agent something simple, like which calendar event clashes with your flight, and the request that hits the API carries far more than your 12-token question. It also carries your SOUL.md, the tool schemas registered on […]

Published on 2026.05.26 by DeepInfra

How Mixture of Experts Models Changed LLM Economics

Every open-weight model that has closed the gap with GPT-5.5 and Claude Opus 4.7 this year has one thing in common. DeepSeek V4-Pro: 1.6 trillion parameters, 49 billion active per token. Kimi K2.6: 1 trillion parameters, 32 billion active. GLM-5.1: 744 billion parameters, 40 billion active. MiniMax M2.7: large total parameter count, 10 billion active […]

Published on 2026.05.26 by DeepInfra

OpenClaw Security: Prevent Prompt Injection & Supply Chain Attacks

In early 2026, China’s Ministry of Industry and Information Technology issued an emergency warning about an AI agent runtime that had quietly grown to 135,000 GitHub stars. By mid-February, security researchers were tracking a coordinated campaign called ClawHavoc. The Moltbook breach had exposed customer email archives from 41 enterprises. OpenClaw’s maintainers had shipped three […]

Published on 2026.05.26 by DeepInfra

Open-Source vs Closed-Source AI Models: Is the Gap Worth It?

The Artificial Analysis Intelligence Index sits at a ceiling of 57. Three frontier models (Claude Opus 4.7, Gemini 3.1 Pro Preview, and GPT-5.5) all land in that band. Meanwhile, four open-weight models released between February and April 2026 now score 50 or above on the same index. A year ago, the best open-weight […]

© 2026 DeepInfra. All rights reserved.