
Double exposure is a photography technique that combines multiple images into a single frame, creating a dreamlike and artistic effect. With the advent of AI image generation, we can now create stunning double exposure art in minutes using LoRA models. In this guide, we'll walk through how to use the Flux Double Exposure Magic LoRA from CivitAI with DeepInfra's deployment platform.

What You'll Need

- A CivitAI account (free)

- A DeepInfra account (free)

Set Up a LoRA Model

1. Log in to your DeepInfra account.

2. Navigate to the Deployments section.

3. Click the "New Deployment" button in the top-right corner.

4. Select "LoRA text to image" from the options.

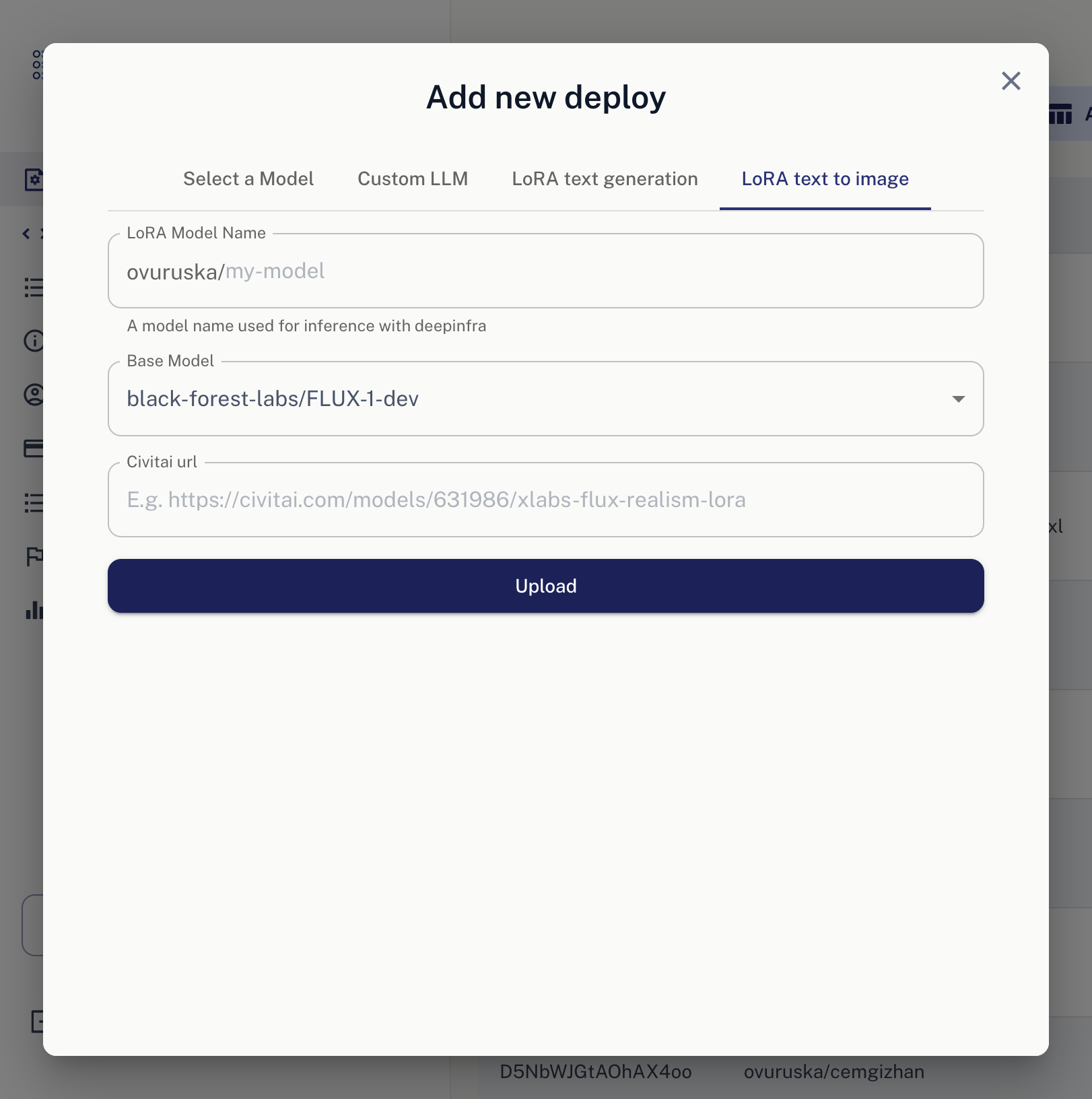

Once you select this option, a deployment form opens. Fill it in as follows:

5. Enter your preferred model name.

6. We'll use FLUX Dev as the base model for this LoRA, so you can keep the default selection.

7. Add the following CivitAI URL: https://civitai.com/models/715497/flux-double-exposure-magic?modelVersionId=859666

8. Click the "Upload" button, and that's it. Voilà!


Once the LoRA has finished processing, navigate to:

http://deepinfra.com/<your_name>/<lora_name>

There you should see the familiar DeepInfra model dashboard, now showing your LoRA's name.
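Besides the dashboard, you may want to call the LoRA from code. The sketch below assumes the deployment is reachable through DeepInfra's general inference endpoint pattern, `https://api.deepinfra.com/v1/inference/<owner>/<model>`, mirroring the dashboard path above, that a JSON body with a `prompt` field is enough, and that the response returns base64 data URIs under an `images` key. Treat all three as assumptions and check your model page for the exact request and response format. The function names and the `DEEPINFRA_TOKEN` environment variable are hypothetical.

```python
import base64
import json
import os
import urllib.request

# Assumed endpoint pattern; verify against the docs shown for your deployment.
API_BASE = "https://api.deepinfra.com/v1/inference"


def build_request(owner: str, lora_name: str, prompt: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for a deployed LoRA."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/{owner}/{lora_name}",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def decode_data_uri(uri: str) -> bytes:
    """Decode a 'data:image/png;base64,...' string into raw image bytes."""
    _, _, payload = uri.partition(",")
    return base64.b64decode(payload)


def generate(owner: str, lora_name: str, prompt: str, out_path: str = "out.png") -> None:
    """Send the request and save the first returned image to out_path."""
    token = os.environ["DEEPINFRA_TOKEN"]  # hypothetical variable name
    req = build_request(owner, lora_name, prompt, token)
    with urllib.request.urlopen(req) as resp:
        result = json.load(resp)
    # Assumes the response holds a list of base64 data URIs under "images".
    with open(out_path, "wb") as f:
        f.write(decode_data_uri(result["images"][0]))
```

With your API token exported, a call such as `generate("<your_name>", "<lora_name>", "bo-exposure, double exposure, cyberpunk city, robot face")` would write the image to `out.png`. Building the request separately from sending it keeps the network call isolated, so the request shape can be inspected or tested offline.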

An Example: Cyberpunk Double Exposure

Now let's create some stunning visuals. Here is the prompt behind this example, broken down:

bo-exposure, double exposure, cyberpunk city, robot face

Key Takeaway ⚠️

Notice that the prompt uses BOTH bo-exposure and double exposure. This combination is crucial: using both terms together gives you the best double exposure effect.
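Because the takeaway is to always include both terms, a small helper can enforce it when you build prompts programmatically. This is a hypothetical sketch: `build_prompt` and `TRIGGER_TERMS` are our own names, not part of any DeepInfra or CivitAI API. It puts both terms first and drops case-insensitive duplicates, so a subject list that already mentions "double exposure" does not repeat it.

```python
# Both terms recommended in the takeaway above.
TRIGGER_TERMS = ("bo-exposure", "double exposure")


def build_prompt(*subjects: str) -> str:
    """Join the trigger terms and subjects into a comma-separated prompt."""
    seen: set[str] = set()
    terms: list[str] = []
    for term in (*TRIGGER_TERMS, *subjects):
        term = term.strip()
        key = term.lower()
        # Skip empty entries and case-insensitive duplicates, keeping order.
        if term and key not in seen:
            seen.add(key)
            terms.append(term)
    return ", ".join(terms)


print(build_prompt("cyberpunk city", "robot face"))
# bo-exposure, double exposure, cyberpunk city, robot face
```

The output reproduces the cyberpunk prompt above, and swapping in other subjects keeps both terms in place.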

More tutorials are on the way. See you in the next one 👋


© 2026 DeepInfra. All rights reserved.