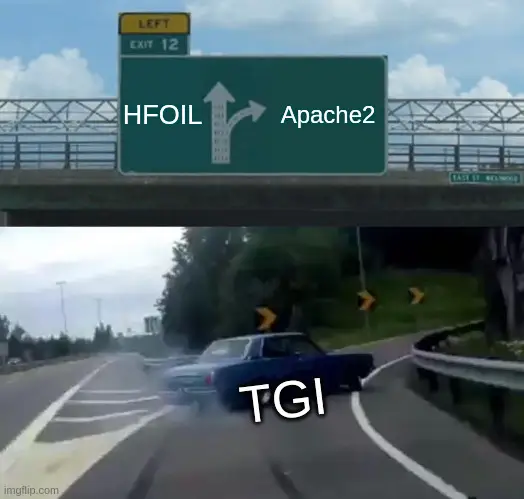

The Text Generation Inference open source project by Hugging Face looked like a promising framework for serving large language models (LLMs). However, Hugging Face announced that it will change the license of the code starting with version v1.0.0. While the previous license, Apache 2.0, was permissive, the new one is too restrictive for our use cases.

Forking the project

We decided to fork the project and continue to maintain it under the Apache 2.0 license. We will continue to contribute to the project and keep it up to date. We will accept pull requests from the community, and we will keep the project truly open source and free to use.

Here is a link to the code: https://github.com/deepinfra/text-generation-inference

We hope that in time a community of other developers and organizations that want to keep this project truly open source will form around it.

License changes mid-flight

Sadly, it is becoming more and more common for popular open source projects to change their license after they gain some traction. This happened with MongoDB, Grafana, Elasticsearch, and many others. As a developer, when you decide to adopt a particular open source project, you start investing time and effort into using it. You build your application around it, and you come to depend on it. Then, suddenly, the license changes, and you might be forced to find an alternative.

Imagine if Meta changed the license of PyTorch. Or if Hugging Face decided tomorrow to change the license of Transformers in a similar way to prohibit commercial use.

We believe that changing the license of an open source project mid-flight is an unfriendly move towards the community.

If you need any help, just reach out to us on our Discord server.

© 2026 DeepInfra. All rights reserved.