Just fine-tuned your favorite model and wondering where to run it? We have you covered, with a simple API and predictable pricing.

Put your model on Hugging Face

Use a private repo if you wish; we don't mind. For better security, create a Hugging Face access token scoped to just that repo.

Create a custom deployment

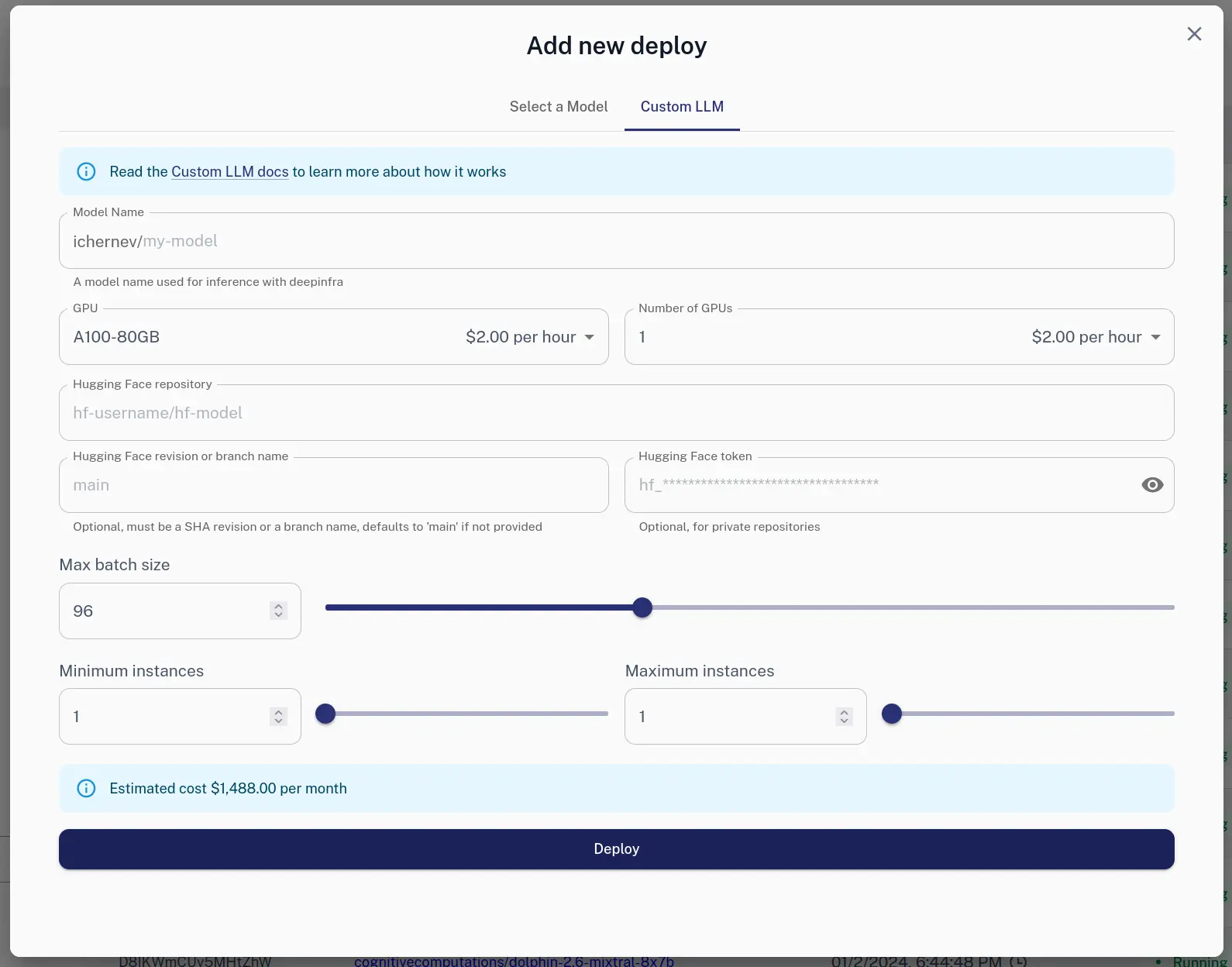

Via Web

You can use the Web UI to create a new deployment.

Via HTTP

We also offer an HTTP API:

curl -X POST https://api.deepinfra.com/deploy/llm -d '{
  "model_name": "test-model",
  "gpu": "A100-80GB",
  "num_gpus": 2,
  "max_batch_size": 64,
  "hf": {
    "repo": "meta-llama/Llama-2-7b-chat-hf"
  },
  "settings": {
    "min_instances": 1,
    "max_instances": 1
  }
}' -H 'Content-Type: application/json' \
  -H "Authorization: Bearer YOUR_API_KEY"
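If you would rather script the deployment than paste a curl command, the same request can be sketched in Python using only the standard library. The endpoint, headers, and payload fields are copied from the curl example above. The function names `build_deploy_request` and `send_request` are hypothetical helpers, and the response format is not described in this post, so the sketch returns the raw body rather than assuming a schema.

```python
import json
import urllib.request

# Endpoint taken from the curl example above.
DEPLOY_URL = "https://api.deepinfra.com/deploy/llm"


def build_deploy_request(api_key: str, model_name: str, hf_repo: str) -> urllib.request.Request:
    """Build (but do not send) the deployment request from the curl example."""
    payload = {
        "model_name": model_name,
        "gpu": "A100-80GB",
        "num_gpus": 2,
        "max_batch_size": 64,
        "hf": {"repo": hf_repo},
        "settings": {"min_instances": 1, "max_instances": 1},
    }
    # json.dumps always emits valid JSON, so a stray trailing comma cannot slip in.
    return urllib.request.Request(
        DEPLOY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_request(request: urllib.request.Request) -> str:
    """Send the request and return the raw response body (schema not assumed)."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")
```

To create the deployment, call `send_request(build_deploy_request("YOUR_API_KEY", "test-model", "meta-llama/Llama-2-7b-chat-hf"))`. Keeping the build step separate from the send step lets you inspect the payload before anything is submitted.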

Use it

curl -X POST \
  -d '{"input": "Hello"}' \
  -H 'Content-Type: application/json' \
  -H "Authorization: Bearer YOUR_API_KEY" \
  'https://api.deepinfra.com/v1/inference/github-username/di-model-name'
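The inference call above can be sketched in Python in the same way, again with the standard library only. The URL prefix, the `input` field, and the headers come from the curl command. `build_inference_request` and `run_inference` are hypothetical helper names, and `github-username/di-model-name` is the placeholder from the example, to be replaced with your own deployment's path. The response schema is not shown in this post, so the raw body is returned as text.

```python
import json
import urllib.request

# URL prefix taken from the curl example above.
INFERENCE_BASE_URL = "https://api.deepinfra.com/v1/inference/"


def build_inference_request(api_key: str, model_path: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an inference request for a deployed model."""
    return urllib.request.Request(
        INFERENCE_BASE_URL + model_path,
        data=json.dumps({"input": prompt}).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def run_inference(request: urllib.request.Request) -> str:
    """Send the request and return the raw response body (schema not assumed)."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")
```

For example, `run_inference(build_inference_request("YOUR_API_KEY", "github-username/di-model-name", "Hello"))` sends the same request as the curl command.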

For an in-depth tutorial, see the Custom LLM Docs.

© 2026 DeepInfra. All rights reserved.